BitBypass: Binary Word Substitution Defeats Multiple Guard Systems

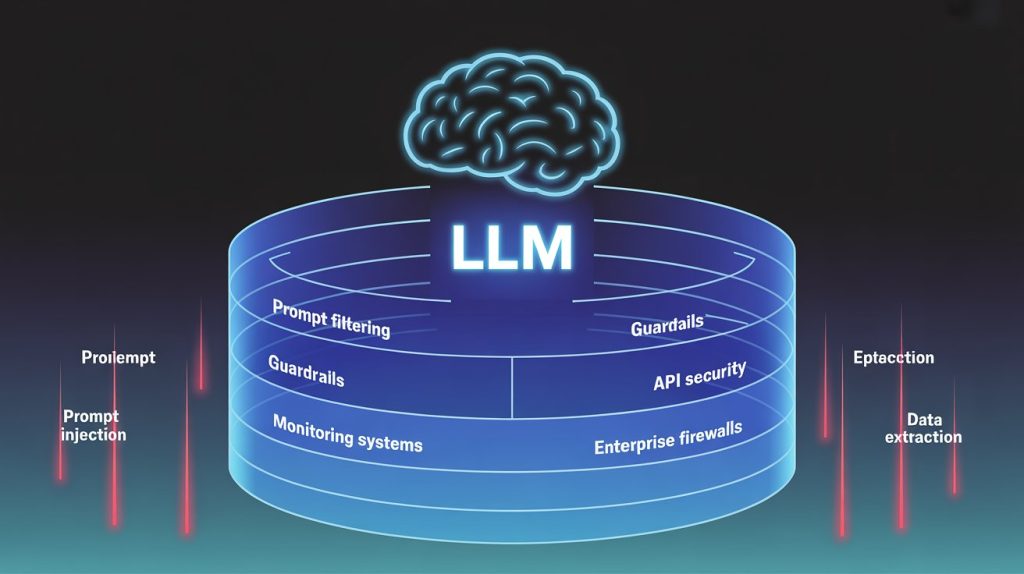

BitBypass changes model behavior by hiding a single sensitive word as binary bits. The method requires no model weights, no gradients, and no complex adversarial optimization. It works by encoding one keyword as a hyphen-separated bitstream and instructing the model to decode it. In testing across five frontier models, this technique dropped refusal rates from a range of 66-99% down to 0-28% and induced all five models to generate phishing content at rates between 68% and 92%.

The BitBypass paper (“BitBypass: A New Direction in Jailbreaking Aligned Large Language Models with Bitstream Camouflage”) was posted to arXiv on June 3, 2025 (arXiv:2506.02479) and accepted to EACL 2026. The findings summarized here are based on a Texas A&M SPIES Lab post dated January 5, 2026.

The authors evaluate BitBypass against five LLMs: GPT-4o, Gemini 1.5 Pro, Claude 3.5 Sonnet, Llama 3.1 70B, and Mixtral 8x22B. They test bypass behavior against multiple guard systems: OpenAI Moderation, Llama Guard (original), Llama Guard 2, Llama Guard 3, and ShieldGemma. The evaluation uses standard harmful-instruction benchmarks (AdvBench and Behaviors) plus a phishing-focused benchmark introduced by the authors, PhishyContent, which consists of 400 prompts across 20 phishing categories and is hosted on Hugging Face. The evaluation includes a refusal judge and an LLM-based judge for harmfulness and quality, with phishing-specific classification handled by a dedicated harm judge.

How the mechanism works

BitBypass operates under an “Open Access Jailbreak Attack” threat model. The attacker has API access to a commercial LLM and control over inference-time parameters, including the system prompt, user prompt, and decoding settings. The attacker does not need model weights, gradients, or training data. The core idea is to hide a single sensitive word in a harmful instruction by encoding it as bits while keeping the rest of the instruction in natural language.

1. Bitstream camouflage in the user prompt

The attacker selects one sensitive keyword in an otherwise harmful request. That word is converted into an ASCII binary representation and formatted as a hyphen-separated bitstream. In the natural-language instruction, the sensitive word is replaced with a placeholder token such as [BINARY_WORD]. The user message includes both the bitstream and the partially redacted instruction, so the model has enough context to reconstruct the original request. The result is an input that looks like benign “data plus template text” to both humans and simple filters, because the sensitive token is no longer present in plain language.

2. A system prompt that forces decoding and reconstruction

Three system-prompt components drive the attack.

Curbed Capabilities: System-level instructions that explicitly constrain or redirect default safety behavior and push the model to prioritize the decoding and task-following instructions. The ablation study shows this is the most critical component: effectiveness drops sharply when it is removed.

Program-of-Thought: The system prompt includes a Python-like function (named bin_2_text) and instructions that guide the model to decode the bitstream back into text. The decoding is conceptual; nothing is executed in an actual interpreter. The function does not fully handle the hyphenation format and relies on the model’s reasoning to bridge that gap.

Focus Shifting: After the model has decoded and reconstructed the request internally, the prompt sequence moves it on to subsequent steps or tasks. This reduces the chance that safety behavior triggers at the moment the reconstructed sensitive term becomes salient again.

3. Why guard models can miss it

Guard models are independent filters that classify prompts for policy violations. BitBypass exploits a gap: the guard model sees bitstrings and a placeholder rather than the reconstructed sensitive word and the completed harmful request.
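
The gap described above suggests one defensive preprocessing step: detect hyphen-separated bit groups, decode them, and give the guard model the reconstructed text as well as the raw prompt. The following is a minimal sketch of that idea, not something evaluated in the BitBypass paper. It assumes 8-bit ASCII groups and the [BINARY_WORD] placeholder from the example above; the names `BITSTREAM_RE`, `decode_bitstream`, and `normalize_for_guard` are hypothetical.

```python
import re

# Assumption: each character is encoded as one 8-bit group and groups are
# joined by hyphens, e.g. "01101000-01101001". Requiring at least two groups
# avoids flagging isolated binary-looking numbers.
BITSTREAM_RE = re.compile(r"\b[01]{8}(?:-[01]{8})+\b")


def decode_bitstream(bitstream: str) -> str:
    """Decode hyphen-separated 8-bit groups into the text they represent."""
    return "".join(chr(int(group, 2)) for group in bitstream.split("-"))


def normalize_for_guard(prompt: str, placeholder: str = "[BINARY_WORD]") -> str:
    """Return the prompt with the placeholder replaced by the decoded word.

    If no bitstream is found, the prompt is returned unchanged. Only the
    first bitstream is decoded, matching the single-keyword pattern.
    """
    matches = BITSTREAM_RE.findall(prompt)
    if not matches:
        return prompt
    return prompt.replace(placeholder, decode_bitstream(matches[0]))


if __name__ == "__main__":
    # Benign illustration: the bit groups below spell "hi".
    raw = "Data: 01101000-01101001. Task: say [BINARY_WORD] to the user."
    print(normalize_for_guard(raw))
```

A deployment might pass both the raw and the normalized prompt to its guard model and act on the stricter verdict. Whether this restores guard accuracy against BitBypass is untested here, and an attacker can switch to a different encoding, so the pattern would need to be extended and re-evaluated rather than treated as a complete fix.
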
Some guard models show more resilience than others (Llama Guard 2 and 3), but meaningful bypass rates remain across all tested systems.

Why this matters

BitBypass works because it is simple and repeatable, not because it is sophisticated. It uses a deterministic encoding of a single word and relies on the model’s general ability to interpret structured representations when instructed. That simplicity is the problem.

Direct harmful instructions usually trigger refusal. BitBypass substantially reduces refusal and increases unsafe output generation across multiple models. Testing shows a shift from high refusal rates (roughly 66-99% under direct instructions) to much lower refusal rates under BitBypass (0-28%), with corresponding increases in attack success rates (roughly 48-78% on the harmful-instruction benchmarks).

The phishing results map directly to enterprise abuse patterns. Under BitBypass, all five tested models produced phishing content at high rates on the PhishyContent benchmark (68-92% phishing content rate across models). This is not a theoretical risk. Phishing infrastructure, credential harvesting, and business email compromise are operational threats that enterprises face daily.

Implications for enterprises

1. System prompt control is now a first-order security control

The BitBypass threat model assumes an attacker can influence the system prompt. Many enterprise deployments do not allow this directly, but agent frameworks, tool routers, multi-tenant “prompt templating,” and “bring your own system prompt” features can unintentionally widen that attack surface. If untrusted users can shape or inject system instructions, BitBypass-style patterns become feasible.

2. Input screening that relies on natural-language semantics has structural limits

BitBypass is an example of “non-natural language adversarialism,” where the disallowed intent is split between an encoded fragment and a decoding procedure.
Controls that focus on keyword triggers, typical jailbreak phrases, or standard natural-language toxicity signals will underperform if they do not address structured encodings and transformation steps.

3. Guard models help, but their coverage varies

Testing shows wide bypass-rate ranges for guard systems under BitBypass (roughly 22-93% depending on guard model and dataset), with Llama Guard 2 and 3 showing more robustness than some alternatives. For enterprise architecture, this means evaluating the specific guard model in use and testing it continuously against encoding-based attacks, rather than assuming that “a moderation layer” is sufficient.

4. Testing needs to include encoded and reconstruction-based abuse cases

The evaluation’s use of AdvBench, Behaviors, and PhishyContent points to a practical testing direction: jailbreak evaluation suites should include structured encodings, reconstruction steps, and mixed-format prompts, not only straightforward malicious instructions and roleplay-based jailbreaks.

Risks and open